Being buried under dirt might be one of the most common fears people have. However, if you were a stormwater management device (or the human designing it), you might begin to worry about not having enough dirt over your system. How much earth cover is enough? Can there be too much? What backfill materials are suitable? Whether you are designing a hydrodynamic separator, a filter, or any of the many types of detention and retention systems, you need to know the answers to these questions, and we are here to help.

As urban development intensifies and stormwater regulations grow more complex, engineers are challenged to design treatment systems that perform effectively within constrained site layouts. Engineered biofiltration has long been a proven solution, with vertical-flow systems like Filterra® delivering reliable, high-rate performance in compact sites. Building on that foundation, Contech’s Modular Wetlands® Linear (MWL) uses horizontal-flow technology that addresses hydraulic and spatial challenges, giving engineers even greater flexibility in how they design for water quality.

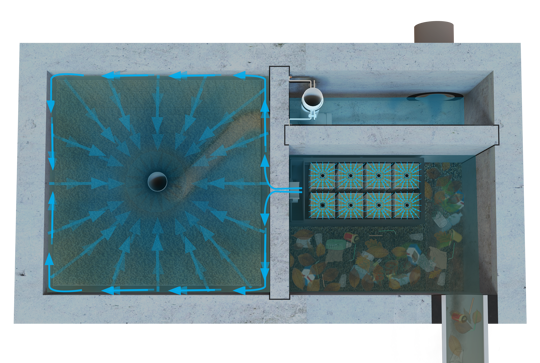

This article explains why underground detention systems need pretreatment to stay effective over time, and how hydrodynamic separators protect storage volume by removing sediment, trash, and floatables before they enter the system. It highlights how proper HDS sizing can extend maintenance intervals, reduce cleaning costs, and preserve hydraulic performance, making pretreatment one of the most cost-effective ways to protect underground stormwater infrastructure.

As land prices rise and project footprints shrink, developers and engineers are forced to rethink traditional stormwater management. Gone are the days when an open detention basin in the back corner of the site was the default. The trend is shifting underground, and for good reason. Corrugated metal pipe (CMP) detention and infiltration systems provide a solution that helps maximize usable land, comply with regulations, and reduce long-term maintenance costs.

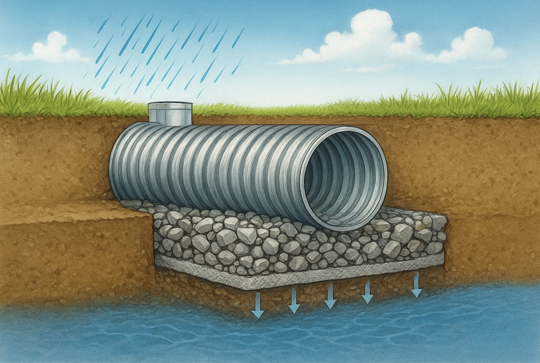

CMP-based infiltration systems offer an efficient way to manage stormwater by recharging groundwater, but proper design is essential for long-term performance. Key best practices include conducting site-specific infiltration testing, using clean angular stone, adding geotextile wrapping, staying above the water table, and optimizing storage depth to maximize system effectiveness.

Stormwater design isn’t always straightforward. Between utility conflicts, easement restrictions, odd property lines, and topography, most sites require more than a one-size-fits-all solution. That’s where the flexibility of corrugated metal pipe (CMP) comes in.

When it comes to underground stormwater systems, longevity depends heavily on material selection. While all corrugated metal pipe (CMP) systems offer structural integrity, the right coating ensures they perform for decades in the specific environmental conditions of your site. This article breaks down the main CMP coating types, their performance in different soil and water conditions, and when to use each.

Stormwater runoff doesn’t just result in pollution and flooding; it can silently overheat rivers and streams, threatening fish and water quality. Learn how underground detention systems offer a hidden but powerful solution to this overlooked environmental challenge.

Infiltration systems are a cornerstone of modern stormwater management—but are we designing them to last? As their use expands, especially underground, new research raises concerns about sediment buildup, maintenance challenges, and long-term performance. This article takes a closer look at whether our current practices are protecting infiltration capacity—or quietly eroding it.

Stormwater parks are an innovative solution for managing urban runoff while providing recreational and ecological benefits to communities. However, in dense urban areas, the large amount of land traditionally required for these parks poses a major challenge due to high costs, limited space, and competing land uses. This blog explores how high-rate bioretention systems offer a compact, efficient alternative that enables the development of successful stormwater parks even in space-constrained environments, with real-world examples from Washington State.

The Stormwater Blog is featured in the Contech Site Solutions Newsletter. Get insights, news, and tips & tricks delivered directly to your inbox.